Artificial intelligence is becoming part of everyday clinical workflows, especially in mental health documentation. In psychiatry, accuracy, nuance, and privacy are not optional. They are foundational. Any system that generates clinical notes must operate with a high level of responsibility, transparency, and clinical alignment.

PMHScribe was built with this in mind. Rather than treating AI as a shortcut, the system is structured around a responsible AI framework aligned with the National Institute of Standards and Technology Artificial Intelligence Risk Management Framework (NIST AI RMF 1.0). This framework provides a practical way to manage risk while supporting innovation and efficiency in healthcare.

This article explains how responsible AI is implemented in PMHScribe, how user roles are structured, and how the system addresses the unique risks of AI in psychiatry.

What Is Responsible AI in PMHScribe?

- Ensures clinical accuracy through provider review

- Limits access based on user role and scope of practice

- Reduces bias through structured prompts and human oversight

- Allows full editing, re-running, and verification of notes

- Tracks changes and feedback to improve performance over time

Understanding Responsible AI in a Clinical Context

The NIST AI RMF defines an AI system as an engineered or machine-based system that can, for a given set of objectives, generate outputs such as predictions, recommendations, or decisions that influence real or virtual environments. In healthcare, those outputs directly affect patient care, provider liability, and regulatory compliance.

The framework emphasizes that AI risk management is essential to building trustworthy, safe, and human-values-aligned systems. It also highlights that AI risks are not purely technical. They arise from the interaction between systems, clinicians, patients, and real-world environments.

For a psychiatric scribe, this means the system must be designed not just to generate notes, but to support clinical judgment without replacing it.

Role-Based Design in PMHScribe

A core part of responsible AI is ensuring that the right information reaches the right user. PMHScribe enforces this through clearly defined user roles that align with the scope of practice and workflow needs.

Psychiatry-focused clinicians such as psychiatrists, psychiatric mental health nurse practitioners, and psychiatric associates receive content tailored to psychiatric documentation, medication education, orders, and evaluation and management decision tools. Users in this role are validated as prescribing clinicians.

Counselors and therapists receive documentation structured around psychotherapy workflows. The system adapts language, structure, and content to reflect therapy sessions rather than medication management visits.

Administrative assistants within group practices have a limited but essential role. They have read-only access to clinical notes. They cannot modify documentation, but they can generate supporting materials such as orders, letters, and prior authorizations for the provider to review and sign.

This separation of roles reduces risk by preventing inappropriate access or modification of clinical content while still supporting operational efficiency.
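
The role separation described above can be sketched as a small permission table. This is a minimal illustration, not PMHScribe's actual implementation: the names `Role`, `Action`, `PERMISSIONS`, and `is_allowed` are hypothetical, and the therapist permissions are an assumption, since the article only states that therapists receive psychotherapy-oriented documentation.

```python
from enum import Enum, auto


class Role(Enum):
    PRESCRIBER = auto()  # psychiatrists, PMHNPs, psychiatric associates
    THERAPIST = auto()   # counselors and therapists
    ADMIN = auto()       # administrative assistants in group practices


class Action(Enum):
    READ_NOTE = auto()
    EDIT_NOTE = auto()
    DRAFT_SUPPORTING_DOC = auto()  # orders, letters, prior authorizations
    SIGN_SUPPORTING_DOC = auto()


# Hypothetical permission table inferred from the role descriptions.
# Therapist permissions are an assumption; the article does not list them.
PERMISSIONS = {
    Role.PRESCRIBER: {
        Action.READ_NOTE,
        Action.EDIT_NOTE,
        Action.DRAFT_SUPPORTING_DOC,
        Action.SIGN_SUPPORTING_DOC,
    },
    Role.THERAPIST: {Action.READ_NOTE, Action.EDIT_NOTE},
    Role.ADMIN: {Action.READ_NOTE, Action.DRAFT_SUPPORTING_DOC},
}


def is_allowed(role: Role, action: Action) -> bool:
    """Return True only if the role's permission set contains the action."""
    return action in PERMISSIONS.get(role, set())
```

In this sketch, `is_allowed(Role.ADMIN, Action.EDIT_NOTE)` is false while `is_allowed(Role.ADMIN, Action.DRAFT_SUPPORTING_DOC)` is true, which mirrors the read-only but operationally useful administrative role. Denying by default for any unlisted role keeps the failure mode on the safe side.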

Human Oversight and Documentation Control

PMHScribe is designed so that clinicians remain fully in control of the final documentation.

Every note can be edited, expanded, or re-run. Clinicians can type directly into the note, add missing details, or regenerate sections if needed. Before a note is finalized, it must be copied into the electronic health record, which introduces an intentional pause for review.

The system also tracks change history. This allows providers to see how a note evolved, identify corrections, and maintain accountability for final documentation.
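
One way to picture change tracking is an append-only list of revisions, where earlier versions are never overwritten and any two versions can be compared. The sketch below is illustrative only: the `Revision` and `Note` classes and their fields are hypothetical, and the article does not describe how PMHScribe stores history internally.

```python
import difflib
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Revision:
    author: str
    text: str
    reason: str  # e.g. "AI draft", "clinician edit", "re-run"
    timestamp: datetime


@dataclass
class Note:
    revisions: list = field(default_factory=list)

    def revise(self, author: str, text: str, reason: str) -> None:
        """Append a new revision; earlier revisions are kept unchanged."""
        stamp = datetime.now(timezone.utc)
        self.revisions.append(Revision(author, text, reason, stamp))

    @property
    def current_text(self) -> str:
        return self.revisions[-1].text if self.revisions else ""

    def diff(self, older: int, newer: int) -> list:
        """Return a unified diff between two revisions, by index."""
        before = self.revisions[older].text.splitlines()
        after = self.revisions[newer].text.splitlines()
        return list(difflib.unified_diff(before, after, lineterm=""))


note = Note()
note.revise("ai", "Patient reports low mood.", "AI draft")
note.revise(
    "dr_smith",
    "Patient reports low mood.\nSleep: 5 hours nightly.",
    "clinician edit",
)
changes = note.diff(0, 1)
```

Here `changes` contains a line beginning with `+` for the sleep detail the clinician added, and each revision records who made the change and why, which is the accountability property the paragraph above describes.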

To support quality assurance, PMHScribe includes a custom Likert scale that allows users to rate the completeness and accuracy of each note. Feedback is captured and used to improve future outputs. This creates a continuous learning loop grounded in real clinical use rather than abstract metrics.
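
As a rough illustration of how such ratings could feed a review loop, the sketch below validates each rating and flags low-scoring notes. The 1 to 5 scale, the `Rating` class, the `summarize` function, and the review threshold are all assumptions made for the example; the article does not specify the scale or how feedback is processed.

```python
from dataclasses import dataclass
from statistics import mean

SCALE_MIN, SCALE_MAX = 1, 5  # assumed 5-point scale; not stated in the article


@dataclass(frozen=True)
class Rating:
    note_id: str
    completeness: int
    accuracy: int

    def __post_init__(self):
        # Reject values outside the assumed scale.
        for name in ("completeness", "accuracy"):
            value = getattr(self, name)
            if not SCALE_MIN <= value <= SCALE_MAX:
                raise ValueError(f"{name} must be in {SCALE_MIN}..{SCALE_MAX}")


def summarize(ratings, review_threshold=3):
    """Average both dimensions and list notes rated below the threshold."""
    if not ratings:
        raise ValueError("no ratings to summarize")
    return {
        "mean_completeness": mean(r.completeness for r in ratings),
        "mean_accuracy": mean(r.accuracy for r in ratings),
        "needs_review": [
            r.note_id
            for r in ratings
            if min(r.completeness, r.accuracy) < review_threshold
        ],
    }


summary = summarize([
    Rating("n1", 5, 5),
    Rating("n2", 2, 3),
    Rating("n3", 5, 4),
])
```

With these sample ratings, only `n2` lands in `needs_review`, because its completeness score falls below the hypothetical threshold. Flagging on the lower of the two scores means a note that is accurate but incomplete still gets a second look.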

Addressing Bias Through Process Design

Bias in AI systems is not only a data problem. It is also a design and workflow problem.

PMHScribe addresses this by incorporating inclusivity experts into the development of prompts and formatting standards. Clinical language is standardized using accepted psychiatric terminology, and outputs are structured to avoid subjective or non-clinical phrasing.

Human oversight remains the primary safeguard. Providers review and validate all outputs, ensuring that patient representation is accurate and appropriate. This combination of structured prompts and clinician review helps reduce the risk of biased or misleading documentation.

Managing Unique AI Risks in Psychiatry

AI in healthcare introduces challenges distinct from those of traditional software. These risks are particularly pronounced in psychiatry, where subtle details carry clinical significance.

One challenge is model drift. Clinical language evolves over time, and patient presentations vary widely, so outputs that were appropriate at launch can gradually fall out of step with practice. PMHScribe mitigates this by continuously refining prompts and incorporating user feedback so that outputs remain aligned with current clinical practice.

Bias from training data is another concern. If not addressed, it can affect how patients are described or how symptoms are interpreted. By using structured clinical terminology and requiring clinician review, the system reduces reliance on implicit assumptions.

Detecting errors in complex outputs is also difficult. Psychiatric notes are narrative and nuanced, which makes automated validation challenging. PMHScribe addresses this through transparency. The note is clearly editable, and providers are expected to review and confirm accuracy before finalizing.

Finally, small inaccuracies can have outsized consequences. A missed symptom, incorrect medication detail, or subtle wording issue can affect diagnosis, billing, or treatment planning. The system is designed to surface detailed information from the transcript and avoid summarizing away clinically relevant data.

Key AI Risks in Psychiatry Documentation

- Changes in clinical language over time

- Bias in training data affecting patient representation

- Difficulty identifying subtle errors in narrative notes

- High clinical impact from small inaccuracies

The NIST framework describes AI systems as socio-technical. Outcomes depend not only on the technology, but also on how people use it and interact with it. PMHScribe reflects this by keeping clinicians at the center of the process rather than attempting to automate them out of it.

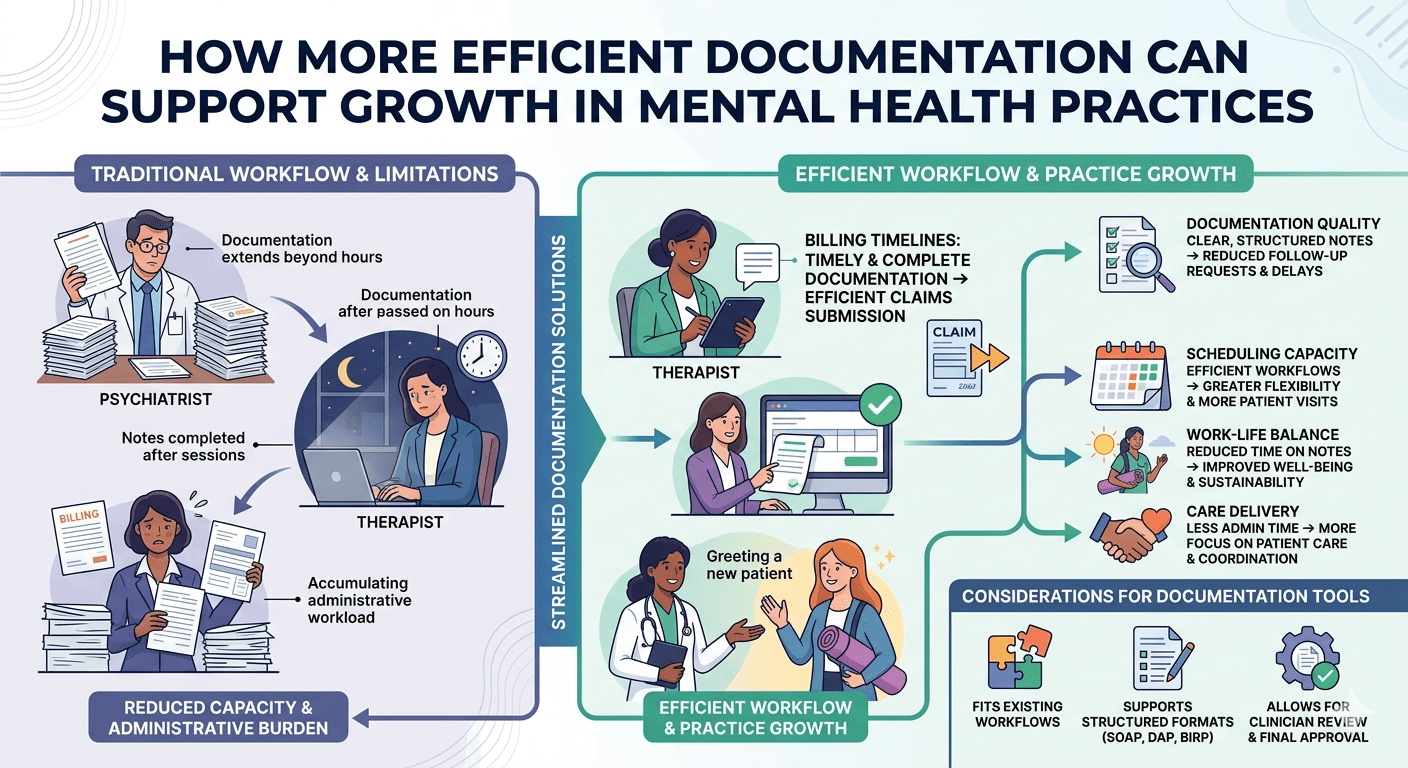

Continuous Risk Management in Practice

Responsible AI is not a one-time implementation. It is an ongoing process.

PMHScribe continuously monitors performance through user feedback, accuracy ratings, and observed error patterns. Updates are made iteratively, with a focus on improving clinical relevance and reducing risk.

The system also supports re-running notes and adjusting outputs as new information becomes available. This flexibility is essential in psychiatry, where understanding often develops over the course of a session.

By combining structured governance, role-based access, human oversight, and continuous improvement, PMHScribe operationalizes the core principles of the NIST AI RMF in a real clinical environment.

Frequently Asked Questions

How does PMHScribe prevent AI errors?

All notes require provider review, can be edited or re-run, and must be finalized by the clinician before use.

Who can access and modify notes?

Only licensed providers can edit notes. Administrative users have read-only access but can generate supporting documents for provider approval.

How is bias reduced in the system?

Through structured clinical language, inclusivity-informed prompt design, and required human oversight.

Conclusion

AI can improve efficiency in psychiatric documentation, but only if it is implemented responsibly. The risks are real, and the stakes are high.

PMHScribe demonstrates that responsible AI is achievable when systems are designed around clinical workflows, clear user roles, and continuous oversight. By aligning with the NIST AI Risk Management Framework, the platform ensures that innovation does not come at the expense of accuracy, safety, or trust.

References

NIST AI 100-1: Artificial Intelligence Risk Management Framework (AI RMF 1.0), National Institute of Standards and Technology, 2023.

PMHScribe aligns its internal processes with the NIST AI Risk Management Framework; however, NIST does not certify or endorse individual AI systems or organizations.